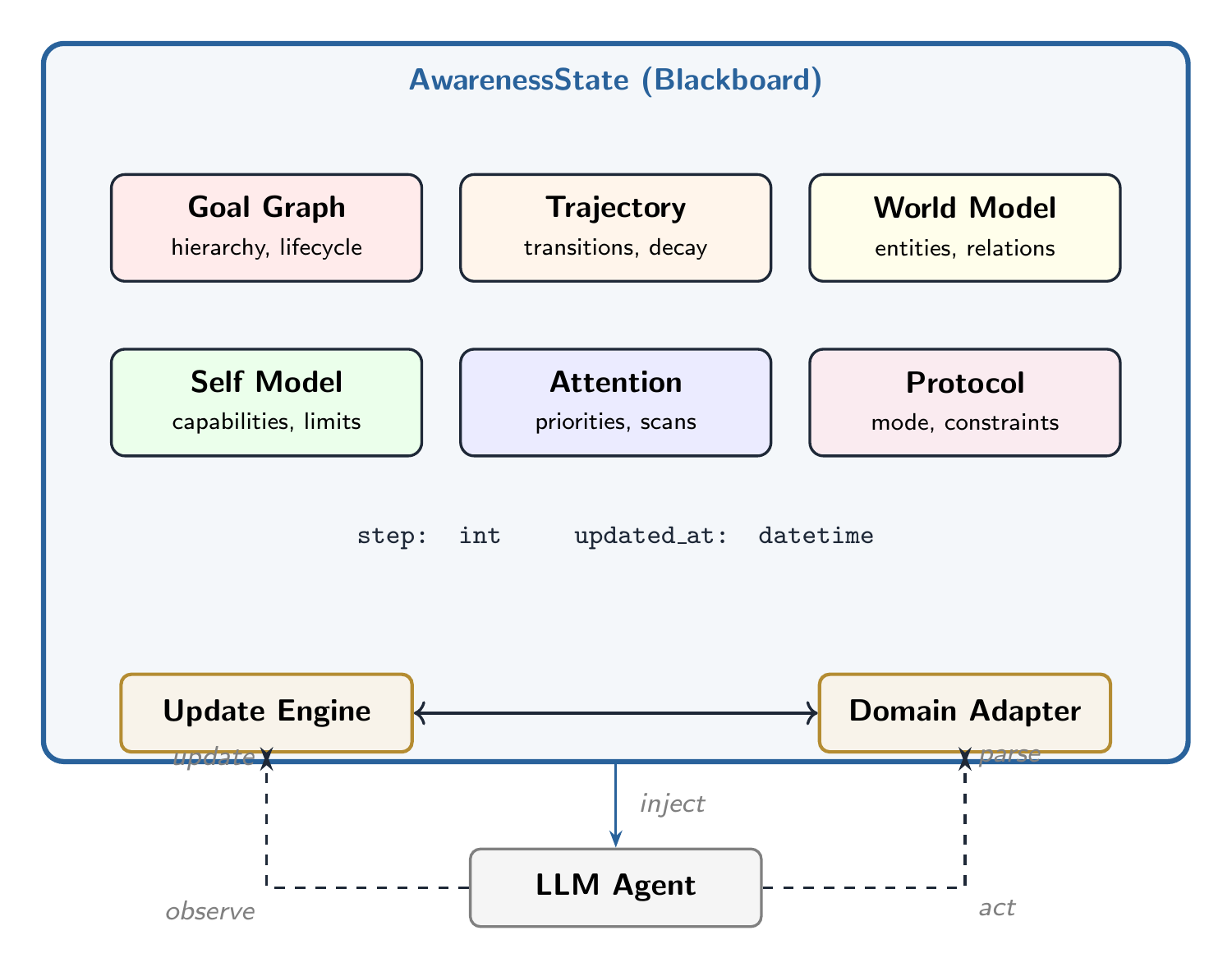

Large language model (LLM) agents operate under a fundamental constraint: each inference call is stateless. Existing approaches to agent memory treat persistence as a storage problem. We reframe it as a cognitive architecture problem. We introduce SAGEN (Situational Awareness for Generative Agents), a modular framework that maintains structured, evolving awareness state across agent interactions through six interoperating cognitive modules: Goal Graph, Trajectory, World Model, Self Model, Attention Priorities, and Interaction Protocol. SAGEN operates via an Observe–Update–Inject loop coordinated by a domain-agnostic engine and domain-specific adapters. We present the architecture, formal specification, a reference implementation, and a proof-of-concept evaluation in the conversational domain. We release SAGEN as open-source software.

Introduction

The deployment of large language models as interactive agents has exposed a structural gap between model capability and operational coherence. A model that can reason about complex plans within a single context window routinely loses the thread of those same plans across sequential interactions. This is not a failure of intelligence. It is a failure of architecture.

The dominant paradigm treats agent memory as information retrieval: store facts, embed them, retrieve relevant chunks at query time. Retrieval-augmented generation (RAG) and its derivatives address the question “What does the agent know?” They do not address: What is the agent trying to do? What just changed? What should it pay attention to? What has it tried before?

These are questions of situational awareness — a concept well-studied in human factors research but underexplored in LLM agents.

The core design principles are:

- Modularity. Six cognitive modules that can be composed, extended, or replaced independently.

- Domain agnosticism. A plugin-based adapter pattern that separates domain-specific perception from domain-general state management.

- Compression over accumulation. Inspired by ACT-R’s memory decay, the framework prioritizes recent, salient, and failure-associated state.

- Injection-native. State rendered as compact, structured text designed for LLM context windows.

Architecture

SAGEN’s awareness state is organized as a blackboard: a shared data structure that multiple cognitive modules read from and write to. The unified state object, AwarenessState, contains six modules and two coordination fields.

Module 1: Goal Graph

The Goal Graph maintains a directed acyclic graph of agent objectives. Each goal has a lifecycle (active, completed, abandoned, blocked, deferred) and a provenance (explicit, inferred, emergent, or spawned).

| Field | Type | Description |
|---|---|---|
| id | string | Unique 8-character identifier |
| description | string | Natural language goal statement |
| status | GoalStatus | Lifecycle state (5 values) |
| source | GoalSource | Provenance (4 values) |
| priority | float [0,1] | Urgency/importance score |
| parent_id | string? | Parent goal for hierarchy |
| depends_on | list[string] | Blocking dependency IDs |
| completion_criteria | string? | Verifiable exit condition |
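The goal schema above can be sketched as a Python dataclass. This is a minimal illustration, not the reference implementation: the enum member names follow the lifecycle and provenance values listed in the text, while the UUID-derived identifier, the default values, and the `is_ready` helper are assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional
import uuid


class GoalStatus(Enum):
    ACTIVE = "active"
    COMPLETED = "completed"
    ABANDONED = "abandoned"
    BLOCKED = "blocked"
    DEFERRED = "deferred"


class GoalSource(Enum):
    EXPLICIT = "explicit"
    INFERRED = "inferred"
    EMERGENT = "emergent"
    SPAWNED = "spawned"


@dataclass
class Goal:
    description: str
    source: GoalSource = GoalSource.EXPLICIT
    status: GoalStatus = GoalStatus.ACTIVE
    priority: float = 0.5
    parent_id: Optional[str] = None
    depends_on: list[str] = field(default_factory=list)
    completion_criteria: Optional[str] = None
    # 8-character identifier; deriving it from a UUID is an assumption.
    id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])

    def __post_init__(self) -> None:
        if not 0.0 <= self.priority <= 1.0:
            raise ValueError("priority must lie in [0, 1]")


def is_ready(goal: Goal, goals: dict[str, Goal]) -> bool:
    """Hypothetical helper: a goal is ready once all its dependencies are completed."""
    return all(
        goals[dep].status is GoalStatus.COMPLETED for dep in goal.depends_on
    )
```

Keeping `depends_on` as a list of identifiers rather than object references keeps each goal flat and JSON-friendly, which matters for the serialization experiment described later.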

Module 2: Trajectory

Records state transitions as a temporal sequence — the agent’s episodic memory. Each transition is typed: progress, reversal, pivot, discovery, external event, failure, or branch. The module implements ACT-R-inspired compression: recent transitions are detailed, routine progress fades, and failures/reversals/discoveries are sticky.
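One way to picture the compression rule is the sketch below. It assumes a fixed recency window measured in steps and drops every old transition that is not a failure, reversal, or discovery. The text only says that routine progress fades, so treating old pivots, branches, and external events the same way is a simplifying assumption, as are the names `Transition`, `compress`, and `recent_window`.

```python
from dataclasses import dataclass

# Transition kinds the text describes as "sticky".
STICKY_KINDS = {"failure", "reversal", "discovery"}


@dataclass
class Transition:
    step: int
    kind: str  # progress, reversal, pivot, discovery, external_event, failure, branch
    summary: str


def compress(
    transitions: list[Transition], current_step: int, recent_window: int = 3
) -> list[Transition]:
    """Keep recent transitions and sticky ones; let everything else fade."""
    kept = []
    for t in transitions:
        is_recent = current_step - t.step < recent_window
        if is_recent or t.kind in STICKY_KINDS:
            kept.append(t)
    return kept
```

A graded scheme, for example shortening old summaries before dropping them, would be closer to a decay curve; the hard cutoff here is only meant to show the asymmetry between routine and sticky events.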

Module 3: World Model

An entity-relationship graph of the agent’s environment. Entities have types, mutable state, and affordances. The World Model also tracks assumptions (unverified beliefs) and unknowns (identified but unanswered questions) — giving the agent explicit access to its own epistemic boundaries.

Module 4: Self Model

The agent’s understanding of its own capabilities, limitations, resources, authority boundaries, and failure history. The can_i(action) method provides a pre-flight check across three dimensions: capability, authorization, and resourcing.
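A pre-flight check along these three dimensions might look like the following sketch. Only the method name `can_i` comes from the text; the field names, the `PreflightResult` type, and the per-action cost table are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PreflightResult:
    capable: bool
    authorized: bool
    resourced: bool

    @property
    def ok(self) -> bool:
        return self.capable and self.authorized and self.resourced


@dataclass
class SelfModel:
    capabilities: set[str] = field(default_factory=set)
    authorized_actions: set[str] = field(default_factory=set)
    resources: dict[str, float] = field(default_factory=dict)
    # Hypothetical cost table, e.g. {"web_search": {"api_calls": 1}}.
    action_costs: dict[str, dict[str, float]] = field(default_factory=dict)

    def can_i(self, action: str) -> PreflightResult:
        """Check capability, authorization, and resourcing independently."""
        costs = self.action_costs.get(action, {})
        resourced = all(
            self.resources.get(name, 0.0) >= amount
            for name, amount in costs.items()
        )
        return PreflightResult(
            capable=action in self.capabilities,
            authorized=action in self.authorized_actions,
            resourced=resourced,
        )
```

Returning the three dimensions separately, rather than a single boolean, lets the agent explain why an action is unavailable, for instance by escalating when it is capable but not authorized.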

Module 5: Attention Priorities

A priority queue of threats, opportunities, anomalies, and transitions. Each item has an urgency score and optional TTL. The module also stores persistent scan patterns — watchlist templates defined by the domain adapter.
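The queue behaviour can be sketched as follows, assuming the TTL is measured in engine steps (the text does not state the unit) and that items are ranked by urgency alone. `AttentionItem`, `expired`, and `top_items` are illustrative names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AttentionItem:
    kind: str  # threat, opportunity, anomaly, transition
    description: str
    urgency: float
    created_step: int
    ttl: Optional[int] = None  # lifetime in steps (assumed unit); None never expires

    def expired(self, current_step: int) -> bool:
        return self.ttl is not None and current_step - self.created_step >= self.ttl


def top_items(
    items: list[AttentionItem], current_step: int, limit: int = 3
) -> list[AttentionItem]:
    """Drop expired items, then return the most urgent ones first."""
    live = [item for item in items if not item.expired(current_step)]
    return sorted(live, key=lambda item: item.urgency, reverse=True)[:limit]
```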

Module 6: Interaction Protocol

The operational contract: communication style, output format, collaboration mode (autonomous, supervised, collaborative, advisory), escalation rules, and hard constraints.

Unified State and the Tick

All six modules reside on the AwarenessState blackboard alongside a global step counter and timestamp. Formally, the awareness state at step $t$ is:

$$S_t = (G_t, T_t, W_t, M_t, A_t, P_t)$$

where $G_t$ is the Goal Graph, $T_t$ the Trajectory, $W_t$ the World Model, $M_t$ the Self Model, $A_t$ the Attention Priorities, and $P_t$ the Interaction Protocol.

The Update Engine

The Update Engine coordinates the three-phase Observe–Update–Inject (OUI) loop that transforms raw observations into structured state and then into LLM-consumable context.

Phase 1: Observe

The adapter’s parse_observation method receives raw input and the current state, returning a structured dictionary of entities, goals, attention items, assumptions, unknowns, and trajectory events. This phase is entirely domain-specific.

Phase 2: Update

The engine applies parsed observations using upsert semantics for entities and append semantics for goals, attention items, and the remaining list-valued fields (assumptions, unknowns, and trajectory events). Expired attention items are removed based on TTL. This phase is domain-agnostic.
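The update semantics described above can be illustrated with plain dictionaries. This is a sketch under stated assumptions: the state layout, the key names, keying entities by `name`, and measuring TTL in steps are all choices made for the example rather than details taken from the reference implementation.

```python
def empty_state() -> dict:
    """Hypothetical minimal state layout used only for this example."""
    return {
        "step": 0,
        "entities": {},
        "goals": [],
        "attention": [],
        "assumptions": [],
        "unknowns": [],
        "trajectory": [],
    }


def apply_update(state: dict, parsed: dict, current_step: int) -> dict:
    # Upsert: create the entity if it is new, otherwise merge its mutable state.
    for entity in parsed.get("entities", []):
        record = state["entities"].setdefault(
            entity["name"], {"type": entity["type"], "state": {}}
        )
        record["state"].update(entity.get("state", {}))

    # Append: list-valued fields only ever grow during this phase.
    for key in ("goals", "attention", "assumptions", "unknowns", "trajectory"):
        state[key].extend(parsed.get(key, []))

    # Expiry: drop attention items whose TTL (in steps, assumed) has elapsed.
    state["attention"] = [
        item
        for item in state["attention"]
        if item.get("ttl") is None
        or current_step - item["created_step"] < item["ttl"]
    ]
    state["step"] = current_step
    return state
```

Because nothing in `apply_update` inspects what an entity or goal means, the same function can serve any adapter, which is the sense in which this phase is domain-agnostic.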

Phase 3: Inject

The adapter renders the current state as a compact text block sized to a token budget. The injection string is structured but natural-language-readable, wrapped in <sagen> tags.
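A budget-aware renderer could be sketched as below. It assumes a crude whitespace word count in place of a real tokenizer and a greedy policy in which earlier sections take priority. The function names and the section dictionary are hypothetical; only the `<sagen>` wrapper comes from the text.

```python
def estimate_tokens(text: str) -> int:
    # Whitespace word count as a rough stand-in for a real tokenizer.
    return len(text.split())


def render_injection(sections: dict[str, list[str]], token_budget: int) -> str:
    """Render sections in order, keeping only the entries that fit the budget."""
    body: list[str] = []
    used = 0
    for title, entries in sections.items():
        kept: list[str] = []
        section_cost = estimate_tokens(f"{title}:")
        for entry in entries:
            cost = estimate_tokens(entry)
            if used + section_cost + cost > token_budget:
                break  # this section is full; later sections may still fit
            kept.append(entry)
            section_cost += cost
        if kept:  # never emit a header without at least one entry
            body.append(f"{title}:")
            body.extend(kept)
            used += section_cost
    return "\n".join(["<sagen>", *body, "</sagen>"])
```

Ordering the dictionary by importance (goals first, then attention, then trajectory) means that a tight budget trims the least critical sections first.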

<sagen>

ACTIVE GOALS:

[explicit] Learn Python (p=0.7)

[explicit] Build a web scraper (p=0.7)

[inferred] Answer: What library for scraping? (p=0.6)

ATTENTION:

[opportunity] Callback to earlier topic

[transition] Topic shift: {'cooking'} -> {'Python'}

ACTIVE TOPICS: Python, web scraping

TRAJECTORY:

[progress] Continuing: {'Python', 'web scraping'}

[pivot] Pivoted from {'cooking'} to {'Python'}

</sagen>

Domain Adapters

Each adapter exposes a domain_name identifier and implements six methods; together they encapsulate all domain-specific logic:

| Method | Responsibility |
|---|---|
| domain_name | Returns the domain identifier string |
| define_entity_types() | Declares entity types the domain recognizes |
| define_relationship_types() | Declares valid relationship types |
| define_scan_patterns() | Provides persistent attention scan templates |
| parse_observation() | Transforms raw input into structured updates |
| format_for_injection() | Renders state as LLM-consumable text |
| define_protocol_defaults() | Sets default interaction protocol values |
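The adapter contract in the table can be sketched as an abstract base class. The member names come from the table; the signatures, return types, and the toy subclass are assumptions made for illustration, and the stub is not the conversation adapter used in the evaluation.

```python
from abc import ABC, abstractmethod
from typing import Any


class DomainAdapter(ABC):
    """Hypothetical base class mirroring the members listed in the table."""

    @property
    @abstractmethod
    def domain_name(self) -> str: ...

    @abstractmethod
    def define_entity_types(self) -> list[str]: ...

    @abstractmethod
    def define_relationship_types(self) -> list[str]: ...

    @abstractmethod
    def define_scan_patterns(self) -> list[str]: ...

    @abstractmethod
    def parse_observation(self, raw: Any, state: dict) -> dict: ...

    @abstractmethod
    def format_for_injection(self, state: dict, token_budget: int) -> str: ...

    @abstractmethod
    def define_protocol_defaults(self) -> dict: ...


class ToyConversationAdapter(DomainAdapter):
    """Illustrative stub only."""

    @property
    def domain_name(self) -> str:
        return "conversation"

    def define_entity_types(self) -> list[str]:
        return ["person", "topic", "concept", "reference", "emotion", "preference"]

    def define_relationship_types(self) -> list[str]:
        return ["mentions", "prefers"]  # placeholder values

    def define_scan_patterns(self) -> list[str]:
        return ["topic shift", "emotional escalation", "callback", "implicit goal"]

    def parse_observation(self, raw: Any, state: dict) -> dict:
        # A real adapter would extract entities, goals, and attention items here.
        return {"trajectory": [{"kind": "progress", "summary": str(raw)}]}

    def format_for_injection(self, state: dict, token_budget: int) -> str:
        # The toy version ignores token_budget and renders the trajectory only.
        lines = [f"[{t['kind']}] {t['summary']}" for t in state.get("trajectory", [])]
        return "\n".join(["<sagen>", "TRAJECTORY:", *lines, "</sagen>"])

    def define_protocol_defaults(self) -> dict:
        return {"collaboration_mode": "collaborative"}
```

Marking every member abstract means an incomplete adapter fails at instantiation time rather than midway through an Observe–Update–Inject cycle.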

Cross-Domain Comparison

| Dimension | Conversation Adapter | Coding Adapter |
|---|---|---|
| Entity types | 6 (person, topic, concept, reference, emotion, preference) | 8 (file, library, error, endpoint, service, concept, function, config) |
| Scan patterns | Topic shift, emotional escalation, callback, implicit goal | Error recurrence, scope creep, dependency conflict, solution regression |
| Primary threats | Frustration, confusion | Blocking errors, regressions |
| Trajectory emphasis | Pivots, callbacks | Failures, discoveries, branches |

The two adapters share only one entity type (“concept”) and no scan patterns, and each produces a domain-appropriate injection format. Yet both run on the identical engine and schema without modification.

Evaluation

State Evolution (Conversation Domain)

| Step | Event | Goals | Entities | Attn | Trajectory |
|---|---|---|---|---|---|
| 1 | Goal creation | 3 | 2 | 0 | 1 (progress) |
| 2 | Topic pivot | 5 | 4 | 1 (transition) | 2 (+pivot) |
| 3 | Callback | 6 | 4 | 2 (+opportunity) | 3 (+progress) |
| 4 | Frustration | 7 | 5 | 3 (+threat) | 4 (+progress) |

Baseline Comparison

| Metric | Raw Buffer | Rolling Summary | SAGEN |
|---|---|---|---|
| Approx. tokens | 92 | 43 | 176 |
| Coverage score (/20) | 2.1 | 4.1 | 19.7 |
| Coverage (%) | 10.5 | 20.5 | 98.5 |
| Info density (cov/100 tok) | 2.28 | 9.59 | 11.23 |
| Fully captured | 1 | 4 | 20 |
| Not captured | 16 | 13 | 0 |

SAGEN captures 98.5% of evaluated information dimensions, versus 20.5% for rolling summaries and 10.5% for raw buffers. The key finding is not SAGEN’s absolute score but the structural gap: 16 of 20 dimensions are not fully captured by either baseline. These are capabilities (goal hierarchy, typed transitions, epistemic boundary tracking, urgency scoring) that require explicit architectural support.

Qualitative Response Comparison

Turn 3 (Callback): Under rolling summary context, the LLM answers the factual question competently but treats it as standalone. Under SAGEN injection, it recognizes the callback, connects it to the original goal, and calibrates its response to the user’s stated skill level.

Turn 4 (Frustration): The summary-context response jumps directly to troubleshooting. The SAGEN-context response first acknowledges the user’s emotional state, calibrates its tone, and adopts a patient, step-by-step approach.

Closed-Loop Evaluation

LLM-generated perception achieves 0.94 alignment with hand-crafted analysis across all four turns. All key cognitive dynamics (pivot detection, callback recognition, frustration monitoring) are preserved regardless of whether analysis is hand-crafted or LLM-generated.

State Serialization

In a split-session experiment, Session 1 processes turns 1–2 and serializes its state to JSON (4.7 KB); Session 2 then processes turns 3–4 from the restored state. The restored engine produces state and injection output identical to those of continuous execution. The blackboard is the state, and the state is the JSON.
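The round-trip property can be illustrated with a reduced state object. The sketch assumes every field holds JSON-native values (dicts, lists, strings, numbers) and shows only a subset of the modules. The field layout and the `to_json`/`from_json` names are hypothetical, not the reference implementation's API.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AwarenessState:
    """Reduced blackboard: two coordination fields plus a few module payloads."""

    step: int = 0
    timestamp: float = 0.0
    goals: list[dict] = field(default_factory=list)
    entities: dict[str, dict] = field(default_factory=dict)
    attention: list[dict] = field(default_factory=list)
    trajectory: list[dict] = field(default_factory=list)

    def to_json(self) -> str:
        # sort_keys makes the output deterministic, so equal states give equal text.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, payload: str) -> "AwarenessState":
        return cls(**json.loads(payload))


# Session 1 ends here: persist the blackboard.
session_one = AwarenessState(
    step=2,
    goals=[{"description": "Learn Python", "priority": 0.7}],
    trajectory=[{"kind": "pivot", "summary": "cooking -> Python"}],
)
payload = session_one.to_json()

# Session 2 starts here: restore and continue from the same state.
session_two = AwarenessState.from_json(payload)
```

Because the state carries no references to live objects, restoring it requires no re-initialization beyond parsing the JSON.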

Discussion

Classification as Infrastructure

SAGEN’s six-module decomposition is not a neutral description of cognition. It is a taxonomic commitment that determines what the agent can represent and therefore what it can reason about. An agent without a Trajectory module cannot distinguish a topic pivot from a topic abandonment. An agent without typed Attention cannot allocate urgency differentially. The taxonomy is not scaffolding; it is load-bearing structure.

Limitations

- No learning. SAGEN maintains state but does not learn from it in the ML sense.

- Perception cost. Each observation requires an additional inference call.

- Single-domain assumption. One adapter at a time; multi-domain composition is not yet specified.

- Limited evaluation scope. Single 4-turn scenario; systematic end-to-end evaluation planned.