Plan Equivalence

Two inference plans are equivalent if they produce identical token sequences under identical model and constraint semantics.

Definition (-Equivalence)

Two plans are -equivalent if logits differ by at most over the valid candidate set and produce identical decoded outputs.

Lemma: Dense and Sparse Equivalence

Let denote the valid token set. Dense masked scoring:

Sparse scoring:

Then:

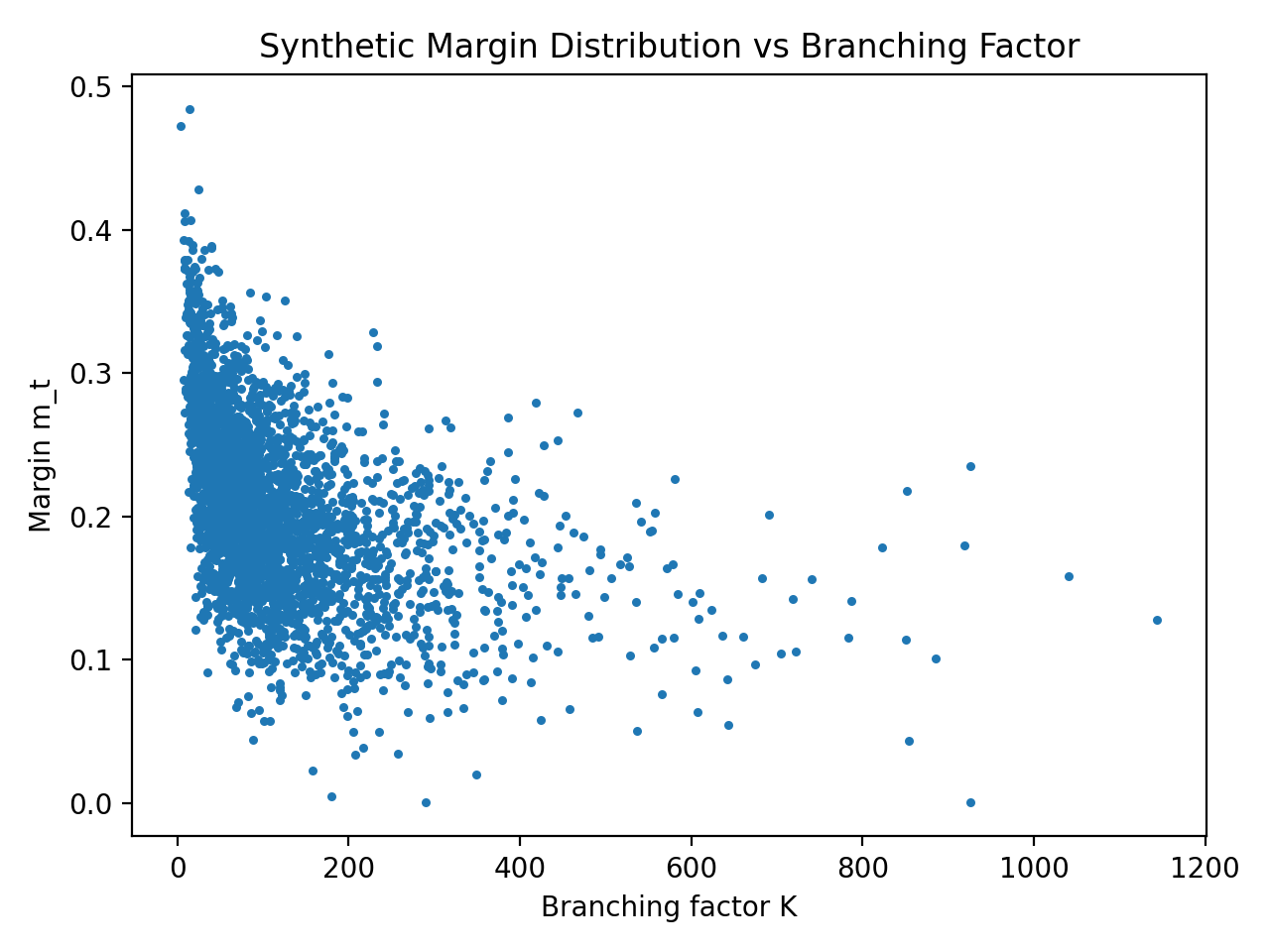

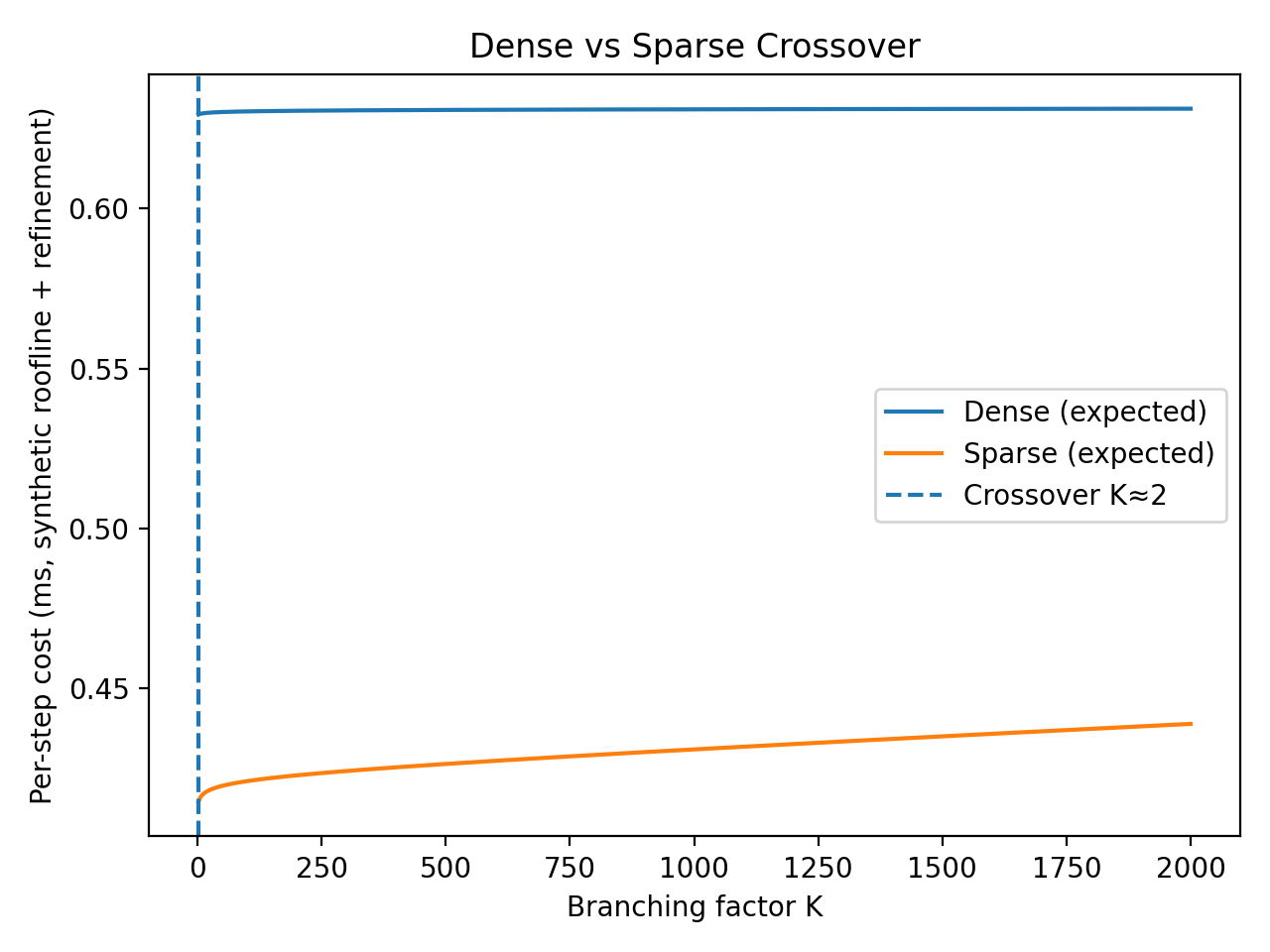

Roofline Regime Analysis

Dense head compute:

Sparse head compute:

Sparse dominates when:

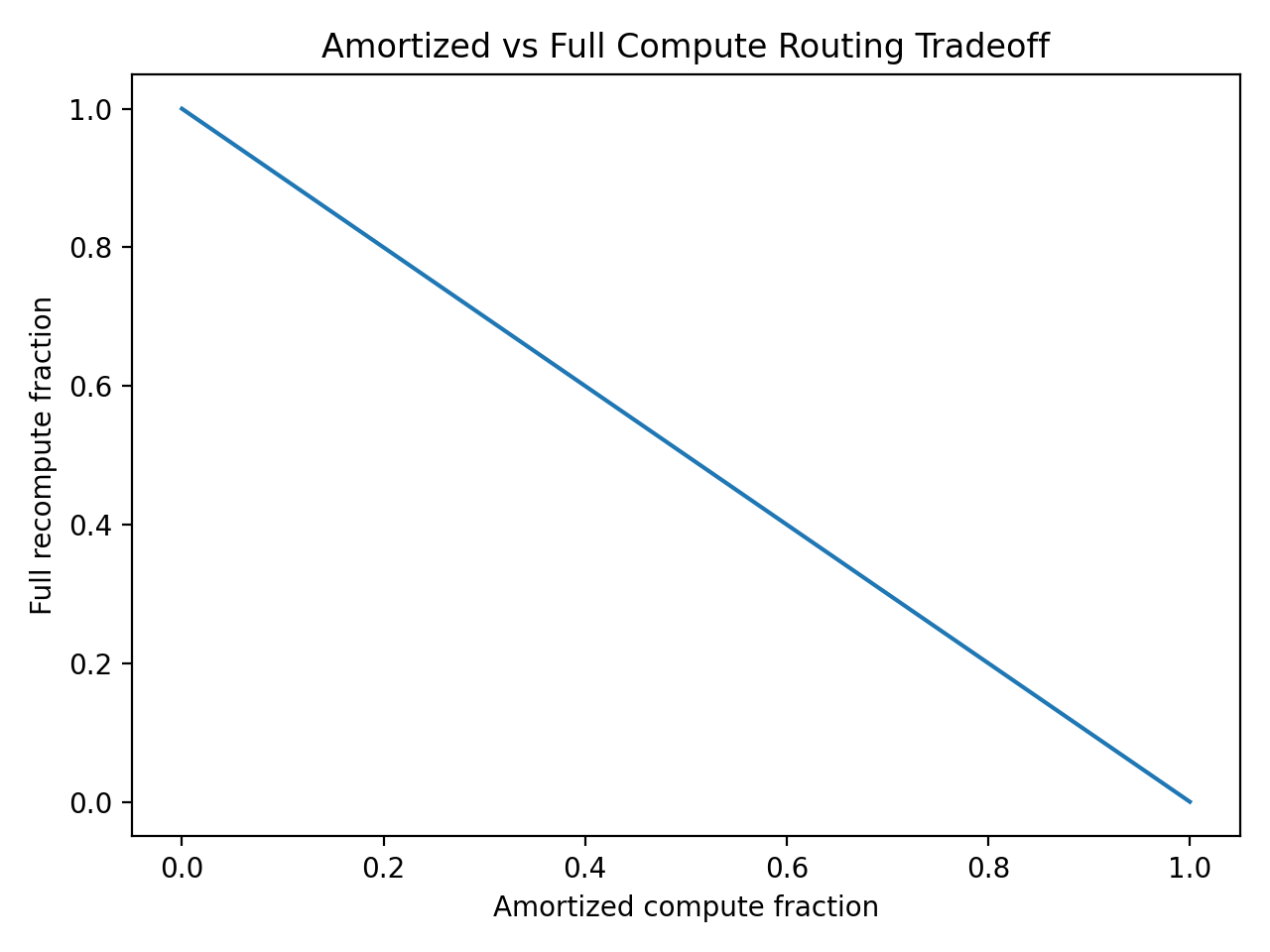

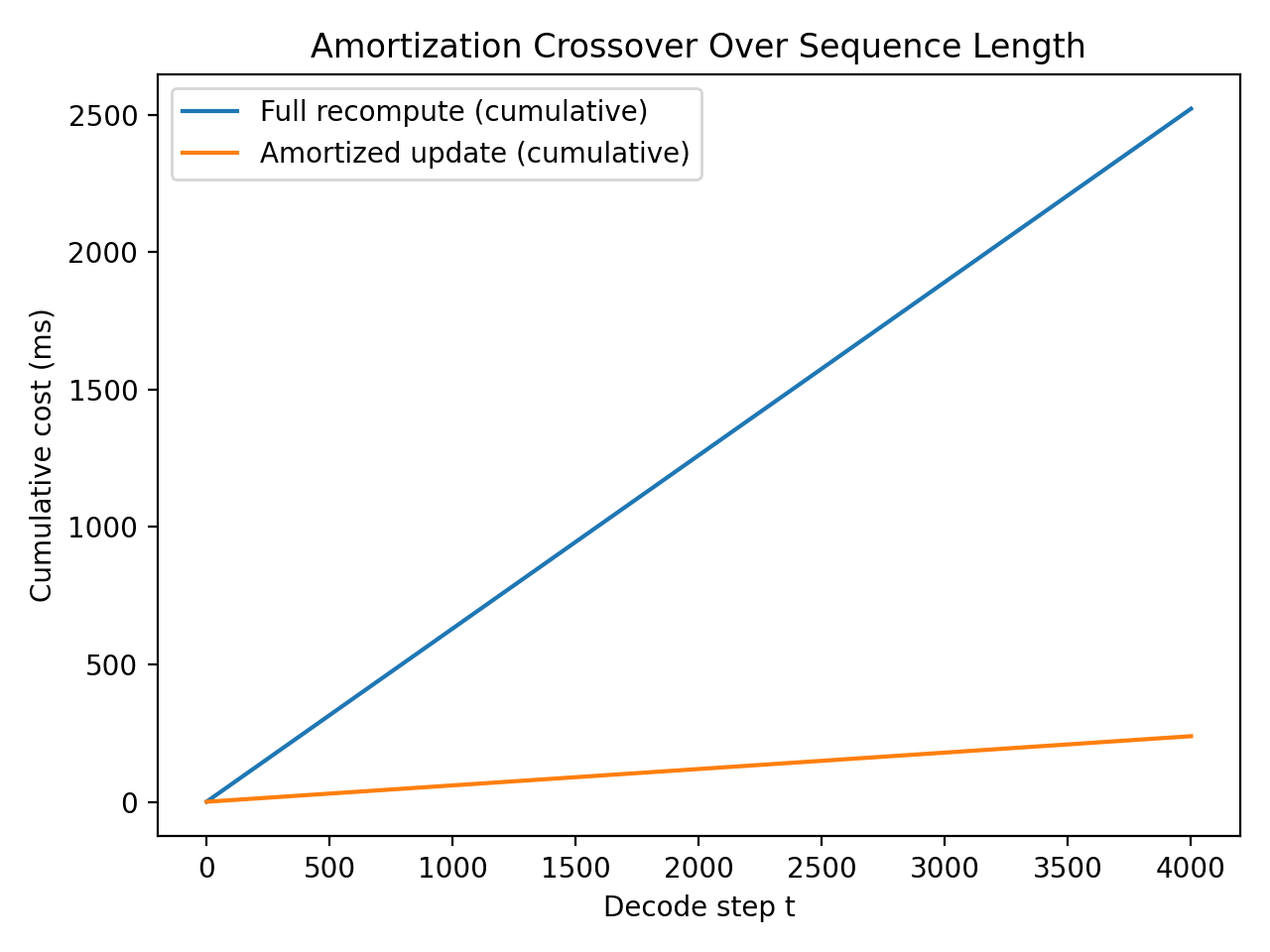

Amortized Query Hypothesis

We model decoder state as:

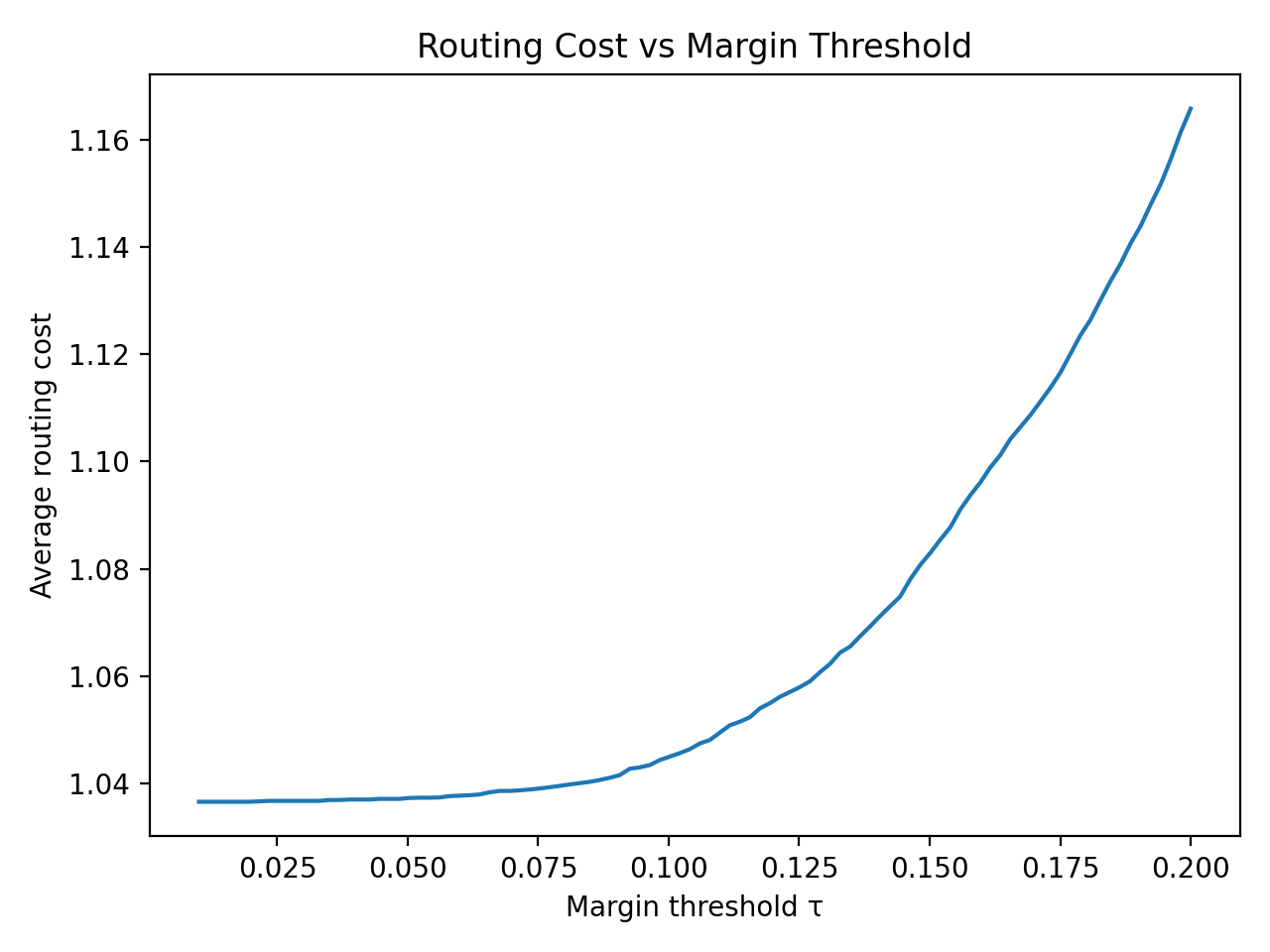

Full transformer recomputation may provide limited incremental information when margins are large. Define margin:

Routing decision — refine if:

Otherwise use amortized scoring.

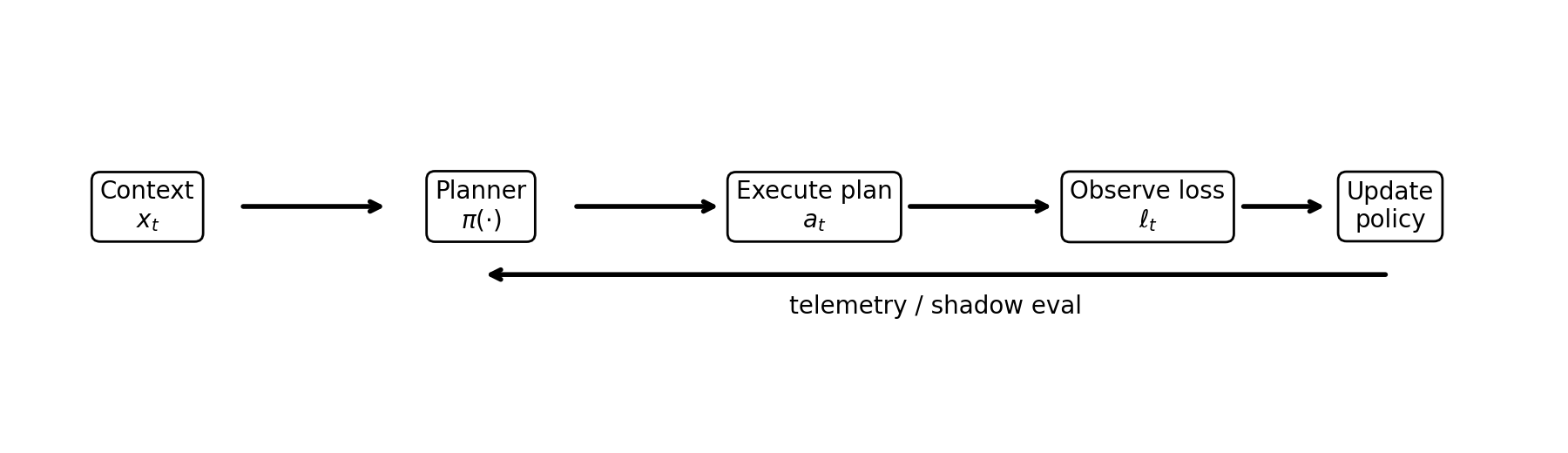

Planner Routing as a Contextual Bandit

At decoding step , the planner observes a context vector and selects an execution plan . We define a scalar loss:

and seek to minimize .

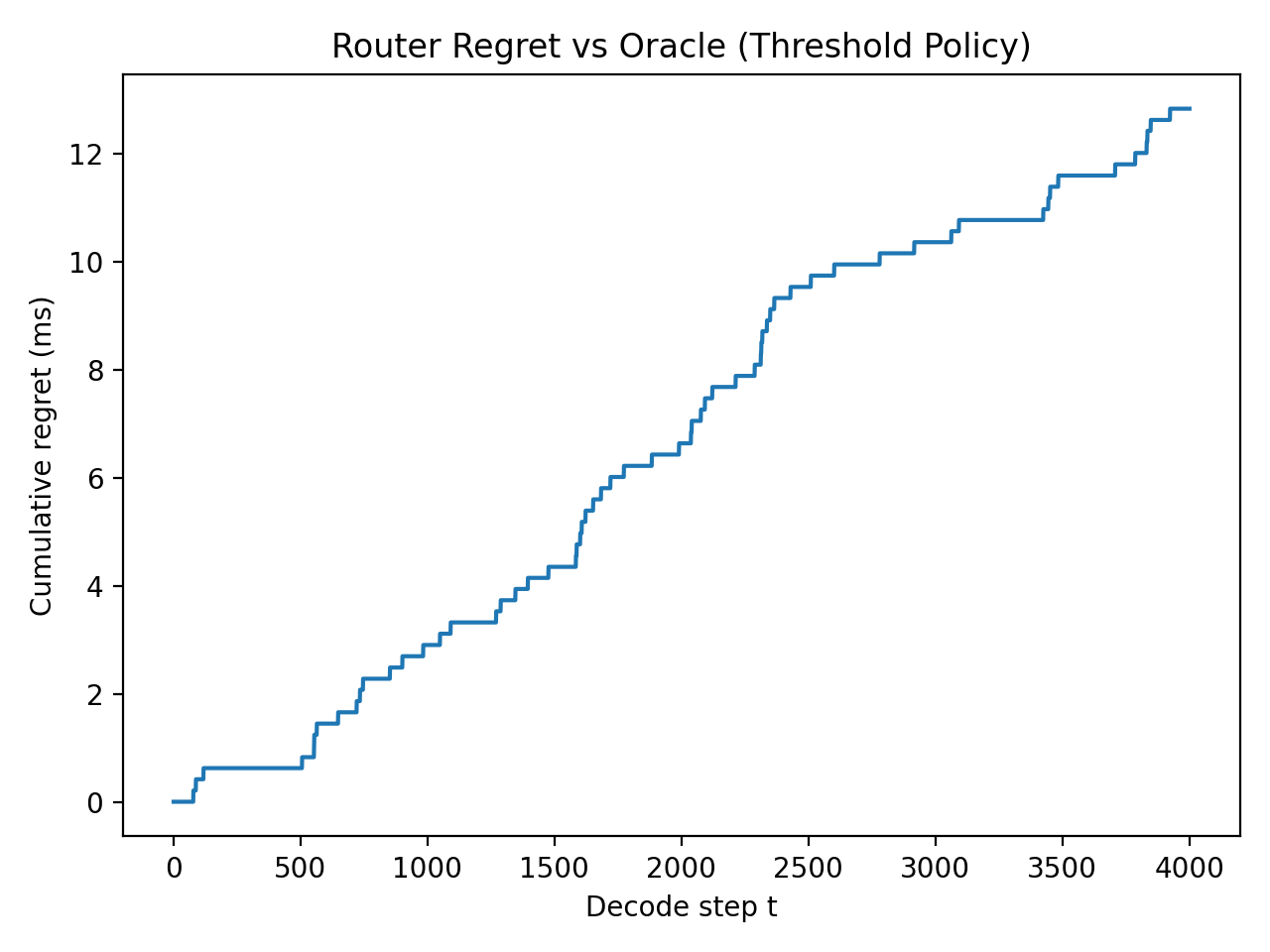

Oracle and Regret

Define the per-step oracle action:

and dynamic regret:

For stationary regimes, define the best fixed plan:

with static regret:

Linear Contextual Bandit

Assume realizability: , with . LinUCB/linear Thompson sampling achieves sublinear regret of the form:

where and hides logarithmic factors.

Nonstationarity and Variation Budget

To capture policy churn, define a variation budget:

Sliding-window or restart-based bandits can achieve dynamic regret bounds of the form:

under standard boundedness assumptions.

Connection to Margin-Based Routing

The threshold router from the amortization section is a finite policy class when is discretized. This enables expert-style learning over with regret for candidate thresholds.

Compiler Integration: Plan Selection in MLIR / StableHLO

Modern inference stacks already compile model graphs through MLIR and StableHLO. LLM-QP can therefore be implemented as a compiler pass that introduces multiple equivalent execution plans and selects among them using a cost model.

Logical vs Physical Plans

We define a logical operator: DecodeStep(query, constraint_state) which expresses the semantics of a single constrained decoding step. Physical implementations include:

- Dense projection head

- Sparse adjacency scoring

- Amortized query update

- Amortized update with rerank

- Full recomputation (refinement)

Plan Expansion Pass

During compilation the planner expands the logical node into candidate physical plans:

DecodeStep -> DenseHead -> SparseHead -> AmortizedHead -> AmortizedHead + Rerank

Cost Model

Each plan is annotated with an estimated cost covering kernel latency estimates, memory bandwidth usage, refinement probability, and device utilization. The cost function follows:

Rewrite Rule

if cost(SparseHead) < cost(DenseHead):

use SparseHead

else:

use DenseHeadIn practice this is implemented using MLIR pattern rewrites or XLA custom calls.

Runtime Adaptation

Runtime telemetry (margins, branching factor, cache state) feeds back into the cost model. The compiler therefore emits a small routing kernel that chooses the implementation at runtime. This hybrid compile-time / runtime planning architecture allows LLM-QP to reuse existing compiler infrastructure while enabling adaptive execution.

Visualizations

Planner Pseudocode

LinUCB for Plan Selection

Let and feature dimension . Maintain per-action matrices and vectors initialized as , . At each step :

- Observe context .

- For each action , estimate and compute:

- Choose (loss-minimization).

- Execute plan , observe loss .

- Update and .

Expert-Over-Thresholds Routing

Discretize thresholds and define policies . Maintain weights initialized uniformly. At each step :

- Observe context and compute each expert’s suggested action .

- Sample an expert index proportional to weights (or pick for greedy).

- Execute , observe loss .

- Update weights using exponential weighting:where is an unbiased loss estimate.

This realizes regret against the best threshold policy in hindsight under standard assumptions.

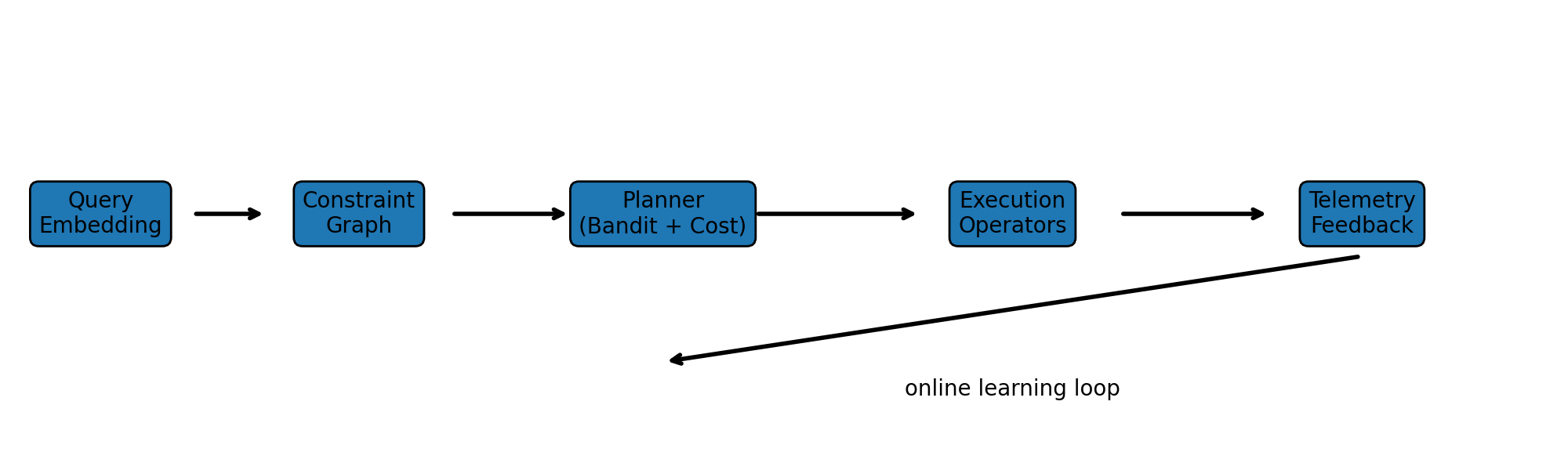

End-to-End System Architecture

The pipeline operates as follows:

- Query embedding produces a representation of the decoding state.

- The constraint graph defines valid token transitions.

- The planner selects an execution plan using contextual signals.

- The chosen operator executes the step.

- Telemetry feeds back to update routing decisions.

Minimal Reproducible Benchmarks

We provide a portable benchmark harness (synthetic, deterministic) that instantiates the cost models and routing policies used throughout the paper. The suite outputs: (i) dense vs sparse crossover vs , (ii) amortized vs full recomputation crossover vs decode length, and (iii) router regret relative to an oracle policy (threshold router).